Chemistry

Learning physics and chemistry easily and freely - Science for elementary school, middle school and high school

Free online chemistry lesson for elementary school, middle school and high school.

Water

Boiling water

The essentials about boiling water

How hot is boiling water?

The question seems simple, but the answer depends on several factors.

First, it depends on pressure: the boiling temperature is not the same at low pressure as at high pressure.

It also depends on the other substances dissolved in the water.

How to make water boil?

Anyone who has ever cooked pasta knows the answer: you only have to heat water enough to make it boil. In other words, you must give the water enough thermal energy to raise its temperature to a value called the "boiling temperature". The thermal energy provided to the water makes its molecules move faster and faster until they can separate from one another and reach a gaseous state.
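As a rough illustration of "enough thermal energy", the sketch below estimates the heat needed to warm water up to its boiling temperature using the standard relation energy = mass × specific heat × temperature change. The specific heat value (about 4186 J per kg per °C) and the function name `heat_needed_joules` are assumptions introduced for this example, not part of the lesson; the estimate ignores heat lost to the pan and the air, and the extra energy needed to turn the liquid into vapor.

```python
# Approximate specific heat of liquid water (assumed value for this sketch).
SPECIFIC_HEAT_WATER = 4186  # joules per kilogram per degree Celsius


def heat_needed_joules(mass_kg, start_c, end_c):
    """Energy needed to warm liquid water from start_c to end_c, ignoring losses."""
    return mass_kg * SPECIFIC_HEAT_WATER * (end_c - start_c)


# One kilogram (about one liter) of water warmed from 20 °C to 100 °C.
energy = heat_needed_joules(1.0, 20, 100)
print(f"{energy / 1000:.0f} kJ")
```

The result scales with the mass of water: twice as much water needs twice as much energy to reach the same temperature.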

Boiling temperature of pure water at ambient pressure

The water chosen is pure: it contains no other substances, in particular no minerals, that could modify the way it boils.

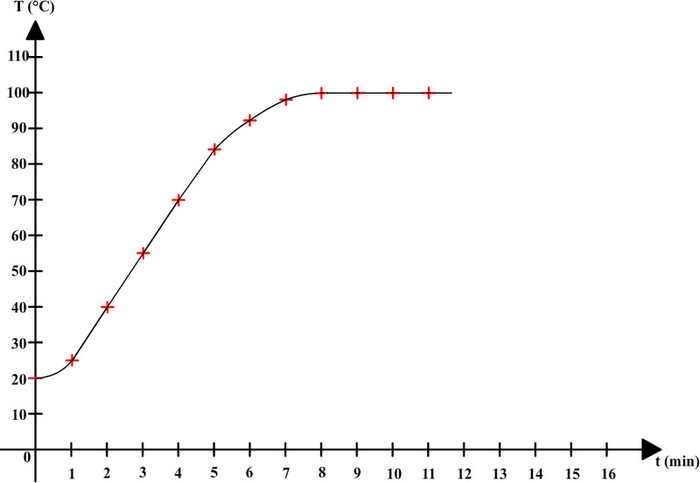

To make it boil, the water is heated, and its temperature is measured with a thermometer every minute.

Here are the results that can be obtained:

| Time (min) | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Temperature (°C) | 20 | 25 | 40 | 55 | 70 | 84 | 92 | 98 | 100 | 100 | 100 | 100 |

These results can be plotted on a graph with time on the horizontal axis and temperature on the vertical axis.

Before boiling, the temperature rises and the water remains liquid; once the water starts boiling, its temperature stays the same (100 °C).

At normal atmospheric pressure, pure water boils at a constant temperature of 100 °C.
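The constant boiling temperature can also be read off the measurements without a graph. The sketch below scans the pure-water readings from the table above for the first run of identical consecutive temperatures; the function name `find_plateau` and the choice of "at least three equal readings" as the definition of a plateau are assumptions made for this example.

```python
# Pure-water readings from the table above, one per minute starting at 0 min.
temps_c = [20, 25, 40, 55, 70, 84, 92, 98, 100, 100, 100, 100]


def find_plateau(temps, min_readings=3):
    """Return (start_minute, temperature) of the first run of at least
    min_readings identical consecutive readings, or None if there is none."""
    run_start = 0
    for i in range(1, len(temps) + 1):
        # A run ends at the end of the list or when the reading changes.
        if i == len(temps) or temps[i] != temps[run_start]:
            if i - run_start >= min_readings:
                return run_start, temps[run_start]
            run_start = i
    return None


print(find_plateau(temps_c))
```

With these readings the plateau starts at minute 8 and sits at 100 °C, which matches the flat part of the temperature curve.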

Boiling temperature of pure water at low pressure

Pressure is the pushing force that a gas or a liquid exerts on each unit of surface it touches.

The pressure exerted by the air is called atmospheric pressure; at a given altitude it varies only slightly.

However, the pressure can easily be changed inside a closed container.

At low pressure, pure water boils slightly differently:

The boiling temperature remains constant but has a value below 100 °C.

At a pressure lower than normal atmospheric pressure, pure water boils at a constant temperature below 100 °C: the lower the pressure, the lower the boiling temperature.
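To put approximate numbers on this rule, the sketch below uses the Antoine equation, an empirical formula that links vapor pressure and temperature. It is not part of the lesson: the coefficients are commonly tabulated values for water between roughly 1 °C and 100 °C (pressure in mmHg, temperature in °C), and the function name `boiling_point_c` is chosen for this example. Results should be treated as estimates.

```python
import math

# Commonly tabulated Antoine coefficients for water (assumed for this sketch),
# valid for roughly 1-100 °C with pressure in mmHg and temperature in °C.
A, B, C = 8.07131, 1730.63, 233.426


def boiling_point_c(pressure_mmhg):
    """Estimate the temperature (°C) at which water boils under the given pressure."""
    return B / (A - math.log10(pressure_mmhg)) - C


# 760 mmHg is normal atmospheric pressure; the other values are lower pressures.
for pressure in (760, 600, 380):
    print(f"{pressure} mmHg -> {boiling_point_c(pressure):.1f} °C")
```

The estimate is close to 100 °C at 760 mmHg and drops as the pressure is reduced, in line with the rule stated above.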

Boiling temperature of pure water at high pressure

Pressure affects the boiling temperature of pure water: what changes at high pressure?

The boiling temperature remains constant, but this time it takes a value greater than 100 °C.

At a pressure higher than normal atmospheric pressure, pure water boils at a constant temperature above 100 °C: the higher the pressure, the higher the boiling temperature.
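The same kind of estimate can be made above atmospheric pressure, for example inside a pressure cooker. The sketch below again relies on the Antoine equation, which is an assumption of this example rather than something the lesson uses; the coefficients are commonly tabulated values for water between roughly 99 °C and 374 °C, and the name `boiling_point_high_pressure_c` is hypothetical. The figures are approximate.

```python
import math

# Commonly tabulated Antoine coefficients for water at higher temperatures
# (assumed for this sketch), pressure in mmHg and temperature in °C.
A, B, C = 8.14019, 1810.94, 244.485
MMHG_PER_ATM = 760  # normal atmospheric pressure expressed in mmHg


def boiling_point_high_pressure_c(pressure_atm):
    """Estimate the boiling temperature (°C) of water at a pressure given in atmospheres."""
    pressure_mmhg = pressure_atm * MMHG_PER_ATM
    return B / (A - math.log10(pressure_mmhg)) - C


for pressure_atm in (1.5, 2, 3):
    print(f"{pressure_atm} atm -> {boiling_point_high_pressure_c(pressure_atm):.0f} °C")
```

Each step up in pressure gives a boiling temperature further above 100 °C, which is why food cooks faster in a pressure cooker.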

Boiling of salt water

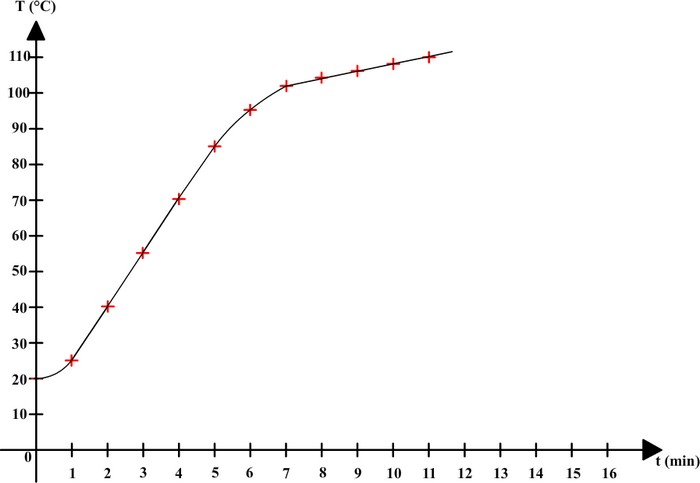

To study how its temperature varies, salt water is heated and its temperature is measured with a thermometer every minute.

Here are the results that can be obtained:

| Time (min) | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Temperature (°C) | 20 | 25 | 40 | 55 | 70 | 85 | 95 | 102 | 104 | 106 | 108 | 110 |

These results can be plotted on a graph with time on the horizontal axis and temperature on the vertical axis.

This time, salt water has no constant boiling temperature. The temperature keeps on rising during boiling.

A mixture doesn't have a constant boiling temperature: its temperature increases during boiling.
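The difference between the two experiments shows up clearly when the change from one minute to the next is computed. The sketch below does this for both sets of readings from the tables in this lesson; the helper name `minute_changes` is introduced only for this example.

```python
# Readings from the two tables in this lesson, one per minute starting at 0 min.
pure_water_c = [20, 25, 40, 55, 70, 84, 92, 98, 100, 100, 100, 100]
salt_water_c = [20, 25, 40, 55, 70, 85, 95, 102, 104, 106, 108, 110]


def minute_changes(temps):
    """Return the temperature change between each reading and the next one."""
    return [later - earlier for earlier, later in zip(temps, temps[1:])]


# Compare the last three minutes of each experiment.
print("pure water:", minute_changes(pure_water_c)[-3:])
print("salt water:", minute_changes(salt_water_c)[-3:])
```

For pure water the last changes are all zero, so the temperature has stopped rising. For salt water they are still positive, so the mixture keeps getting hotter while it boils.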

Learn more

- Boiling water and cooking: This page from "What's Cooking America" explains how to make water boil and gives the boiling temperature of water at various altitudes.

- How to boil water?: An educational video that explains the steps to follow to make water boil.

- Boiling point elevation: This article explains that substances dissolved in water raise its boiling temperature.

©2021 Physics and chemistry